A few weeks ago, I asked ChatGPT to write an article, and I have to say, it exceeded my expectations. Not only did ChatGPT write a comprehensive article, but it also included helpful headlines for each section. Since then, I’ve been thinking a lot about Artificial Intelligence (AI) and what it could mean for the future.

I’ve been keeping up with news about AI developments, but ChatGPT really stood out to me. While there are other models out there doing similar things, there must be a reason why ChatGPT made such big headlines. I think it’s because people like me started using AI models for the first time with very tangible results. However, as useful and unique as it can be, I do have some concerns.

The smaller problems

After receiving the article from ChatGPT, I requested another one using similar keywords. ChatGPT delivered, but the resulting article was 62% similar to the first one.

I doubt this would happen if I asked two people to write an article using the same keywords. As humans, we all have unique minds and experiences that shape our thoughts and words. Each person’s creativity is unique because it requires unique brain networks to fire simultaneously.

It’s no surprise that ChatGPT lacks originality, since it’s trained on millions of pieces of information from various sources. AI relies on pre-existing information to produce content. In contrast, humans learn in various ways and may draw different conclusions from similar experiences.

Another challenge with AI is bias. AI algorithms are only as good as the data they’re trained on, which can lead to biased results. Microsoft experienced this firsthand in 2016, when its Twitter chatbot, Tay, began posting racist and misogynistic messages within 24 hours of its launch.

Even OpenAI, the creator of ChatGPT, acknowledges the limitations of AI. They warn that it “may occasionally generate incorrect information,” “may occasionally produce harmful instructions or biased content,” and has “limited knowledge of the world and events after 2021.”

The last limitation is telling. Is it possible for an AI chatbot to “live in the present”? Today’s reality is so fragmented and dependent on individual perspectives that even humans struggle to discern the truth. How can scientists train an AI model to differentiate truth from falsehood? If this were possible (let alone easy), why haven’t humans mastered this ability yet?

The bigger problems

I asked ChatGPT about the jobs it can potentially replace, and here’s the response I received: “Customer service, writing and content creation, language translation, education and training, research and development (R&D).” What do these domains have in common? With the possible exception of R&D, these jobs are often low-paying, which means that many who work in these fields depend on the income to make ends meet.

Experts believe that AI may also affect some higher-paying professions, such as software development, data science, and financial analysis. It’s curious that ChatGPT didn’t mention these professions, and one can’t help but wonder if there’s an ulterior motive.

When it comes to content creation, I can see how ChatGPT could easily replace some jobs. In fact, BuzzFeed recently laid off 180 employees (12% of its workforce) in favor of using ChatGPT to generate content. While this may be a cost-effective solution for companies, it’s unfortunate for those who have lost their jobs. Personally, I’ve used a gig economy website in the past to find and pay a real person to edit my writing. But if ChatGPT can do the job for free, would I continue to pay someone else? Probably not.

The problem, however, is that many of the people I’ve paid for editing or other tasks live in economically disadvantaged areas. The small amount of money that each of us paid may have translated into a substantial amount in their local economy. With the rise of AI and automation, it’s unclear what will happen to these individuals and their communities.

Another major concern is fact-checking. “Bad actors use artificial intelligence to propagate falsehoods and upset elections, but the same tools can be repurposed to defend the truth.” However, the models that are developed to fact-check can still be influenced by the biases, beliefs, and shortcomings of the humans who create them. While AI may not necessarily make things worse, it’s unlikely to make them better either. It’s unrealistic to expect opposing countries to use the same AI models to fact-check when their interests depend on maintaining their own beliefs and the status quo.

But let’s assume for a moment that opposing countries did agree to use the same AI model. What happens if the model produces an unexpected result that upsets the geopolitical balance? Who takes responsibility for the AI output that causes harm? Aren’t the scientists behind an AI model human beings who can be bullied or even bought?

Have we lost our focus?

I wonder to what extent technological innovation occurs for the sake of innovation itself, rather than to address and solve a specific problem. Are we using AI to solve significant global issues, or are we merely using it to fix inconveniences?

Recently, I read the news about a robo-dog for people with visual impairment. Equipped with AI, it talks and helps them navigate cities. Why were living service dogs not good enough? Apparently, they are expensive to train and maintain, so the answer technology offered was (what else?) robots. That would be quite reasonable, except that 90% of vision loss is preventable or treatable with spectacles or eye surgery. Millions of people live with visual impairment because they don’t have access to these treatments, making it a significant and global problem. Shouldn’t we use our resources to prevent and treat vision loss in the first place?

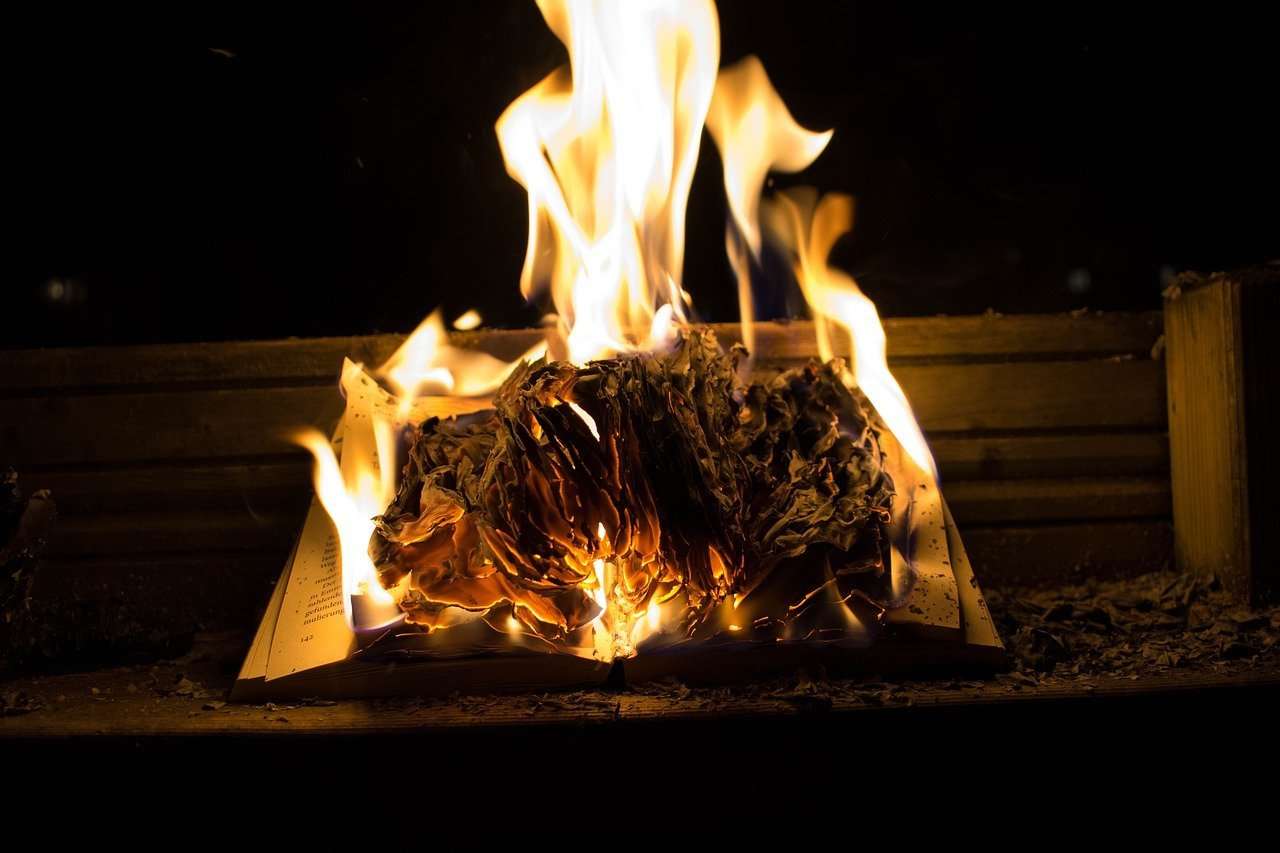

We have built a world so dependent on technology and so obsessed with growth that we are now willing to put a price on the only thing that makes us unique in this world: our brain. While we have not fully comprehended its capabilities, we are trying to make a digital copy of it.

Are we sure we know what this means?